Zorba with Vera Chiodi, Associate Professor at Sorbonne University Paris

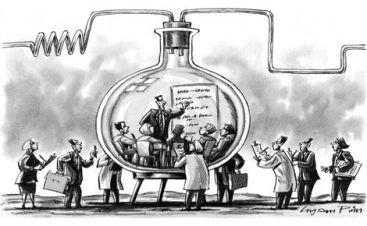

Paris.- How good is our knowledge about the effectiveness of international development programs? How can we improve it? Vera Chiodi, Associate Professor at the Sorbonne University of Paris, guided us last Tuesday through the key current issues in impact evaluation and the methodologies developed so far, Randomized Control Trials among them.

First of all, the data we rely on to predict poverty are not always that strong. GDP can be a strong predictor in some cases, like Sweden, which ranks similarly whether measured by GDP or by the Human Development Index (HDI): 13 and 12 respectively. But it fails completely in the case of South Africa, which would rank 84 globally if measured by GDP but 118 if measured by HDI. More importantly, there is no strong agreement on the impact of development programs. Despite the UN target, in place since 1970, for donor countries to devote 0.7% of their gross national income to international aid, it is not clear how much has been achieved. Some are quite pessimistic, like Bill Easterly, famous for his books The White Man’s Burden and The Elusive Quest for Growth, who suggests that maybe we should stop aid altogether. On the other side of the spectrum, optimists like Jeffrey Sachs believe that the silver bullet for development is precisely to inject substantial amounts of money to help communities escape the poverty trap they are stuck in.

This background explains why impact evaluation has become so important in the field of development. We should not confuse “impact evaluation” with “monitoring & evaluation”. The latter is a more descriptive approach that focuses on checking that a program took place as expected, that process requirements were met, and so on. The former tries to identify the difference between what happened with a program (the observed outcome) and what would have happened without it (the counterfactual). A perfect comparison is impossible, because we can never observe the same people both with and without the program. The art of impact evaluation is to reconstruct the counterfactual as credibly as possible, and that is difficult.

Several methodologies have been developed to measure impact, such as comparing “with and without”, comparing “before and after”, combining both to measure the “difference-in-differences”, or “statistical matching”. But most of these methodologies rest on the assumption that the world would not have changed without the intervention being analyzed, which is quite unrealistic. Other methodologies make more sophisticated attempts at isolating the real impact of a program, such as “Regression Discontinuity Design” and “Randomized Control Trials” (RCTs). These are becoming common tools to assess and redesign international development programs, and even domestic interventions.

One of the questions raised in the debate was whether RCTs could have unethical consequences, since by definition you provide some presumably beneficial aid to one group and deprive the control group of it. But the fact is that there is always some sort of selection, so randomization may simply be a more transparent and fair selection mechanism. Another common criticism is that an RCT may not be worth its cost. But after all, isn’t the cost of replicating ineffective programs higher?
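To make the difference between the simpler comparisons concrete, here is a minimal sketch in Python. The four averages are invented for illustration (they are not figures from the talk), and the function names are hypothetical. It shows how “before and after”, “with and without” and difference-in-differences can give three different answers for the same program. Difference-in-differences has its own assumption: that both groups would have followed the same trend without the program.

```python
# Illustrative only: the four averages below are invented, not data from the talk.
treated_before, treated_after = 100.0, 130.0  # group that received the program
control_before, control_after = 90.0, 105.0   # comparison group without it


def before_after(t_before, t_after):
    """Change in the treated group over time; ignores what would have changed anyway."""
    return t_after - t_before


def with_without(t_after, c_after):
    """Gap between the groups after the program; ignores pre-existing differences."""
    return t_after - c_after


def diff_in_diff(t_before, t_after, c_before, c_after):
    """Treated group's change minus the control group's change over the same period."""
    return (t_after - t_before) - (c_after - c_before)


print("before and after:", before_after(treated_before, treated_after))
print("with and without:", with_without(treated_after, control_after))
print("difference-in-differences:",
      diff_in_diff(treated_before, treated_after, control_before, control_after))
```

With these made-up numbers the three estimates are 30, 25 and 15. The first counts the general improvement that the control group also experienced, and the second counts the gap that already existed between the groups before the program started.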
